Implementing the mobile continuing medical education (mCME) project in Vietnam: making it work and sharing lessons learned

Introduction

In 2009, to improve the quality of its clinical workforce, the National Assembly of Vietnam passed the “Law on Examination and Treatment” (LET) (1). Among other things, the LET required that all health care providers (doctors, nurses, and community health workers) be licensed, with licensure maintenance contingent on regular participation in accredited continuing medical education (CME) activities (2). This presented an immense challenge: it required creating both CME content and a CME delivery system that could track registered users’ compliance and performance over time, in a setting with limited financial resources. In this context, distance learning emerged as the preferred strategy because of its perceived ability to reach large numbers of geographically dispersed practitioners at lower cost and with less disruption than traditional in-person CME workshops (3).

Multiple pilot studies have used SMS messages delivered via mobile phone as a training tool for healthcare workers, with mixed success (4-10). SMS messaging can extend distance learning to a far wider range of end-users, and it provides a means to monitor participation through participants’ responses, sent either via SMS or through linked internet-based applications.

Methods

The growing use of new SMS technologies and Vietnam’s CME challenge converged to create an opportunity to design and test a mobile CME (mCME) project. Funded by the Fogarty International Center at the US National Institutes of Health (1R21-TW009911), and in partnership with colleagues at several institutions in Vietnam, including the Vietnamese Ministry of Health (MOH), we developed and implemented an SMS-based mHealth CME intervention, which was tested and revised over the course of two randomized controlled trials (RCTs) conducted between 2014 and 2017.

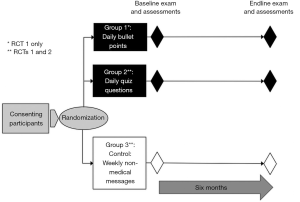

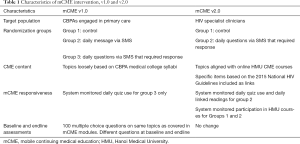

The first RCT (mCME Version 1.0), conducted from May to November 2015 in the northern province of Thái Nguyên, tested whether an SMS-based educational intervention could improve medical knowledge among 638 Community-Based Physicians’ Assistants (CBPAs). Participants were randomly assigned to one of three arms, all of which received regular SMS messages over a 6-month period: (I) control (a weekly text with non-medical content); (II) intervention arm 1 (a daily text with a multiple-choice question on one of various domains of primary care practice, which participants answered, followed by an automated reply indicating whether the answer was correct); or (III) intervention arm 2 (a daily text on topics similar to the quiz questions but written as a statement of fact) (Figure 1). We found that this approach was well accepted by CBPAs and technically feasible but did not improve medical knowledge as measured by examination scores (11).

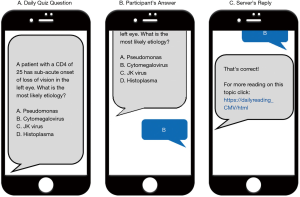

Through analysis of qualitative and quantitative data from mCME v1.0, we identified several key limitations in the original design (12), which informed the development of mCME v2.0. The revised intervention was evaluated in a second RCT conducted with 106 Vietnamese HIV specialist clinicians from three provinces in northern Vietnam (Thái Nguyên, Hải Phòng, and Quảng Ninh) from November 2016 to May 2017. Participants were randomly assigned to one of two arms: a control arm (a weekly text with non-medical content) or an intervention arm (a daily text with a multiple-choice question on selected topics in HIV care, which participants answered, followed by an automated reply indicating whether the answer was correct) (Figure S1). These modifications proved successful, leading to important gains in subjectively and objectively measured medical self-study behaviors and a significant improvement in medical knowledge compared with the control group (13).

The goal of this companion report is to describe key elements of the project’s design and implementation that we believe will help others seeking to generate evidence on programs similar to ours across a broad range of clinical domains. It provides a detailed description of the development of the software architecture that delivered the intervention, the development of educational content for both the intervention and its evaluation, and the exam process used to provide a balanced assessment of intervention impact. As a synthesis, we discuss what we believe are the key lessons learned from the mCME project.

Results

Phase 1: creation of mCME v1.0

Essential partnerships

The success of the mCME project depended critically on the partnerships established between Boston University (BU) and individuals and organizations in Vietnam. For mCME v1.0, we established a collaborative team consisting of members from Boston University School of Public Health (BUSPH), Pathfinder International, Inc. (PII), the information technology team within the Center for Population Research Information and Databases (CPRID), a branch of the Vietnamese MOH within the General Office for Population and Family Planning (GOPFP), and local implementing partners at the Thái Nguyên Provincial Health Department. The content development process was conducted jointly by individuals at Boston University School of Medicine (BUSM), BUSPH, Hanoi University of Public Health (HUPH), and Hanoi Medical University (HMU). The research team at BUSPH and PII developed the methodology for administering the exams, while partners in Thái Nguyên and at PII managed on-site consent, system registration, on-site testing of SMS delivery fidelity, and oversight of the examinations.

SMS software system development

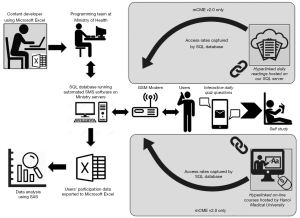

Several factors influenced the initial development and implementation of the SMS software used in the intervention, including the wide geographic spread of the CBPAs, the lack of internet access at the Commune Health Centers (CHCs) where they practice, and the fact that multiple CBPAs often work together at a single CHC. Because of these geographic constraints and the lack of internet access, we needed a software program that could repeatedly and reliably send daily messages, track participants’ response rates, and send appropriate follow-up responses. Additionally, because mCME v1.0 included participants who still used feature phones, we were constrained by the 160-character limit for a text message. The project absorbed all costs of the messages that participants sent in reply to the daily messages.
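
To make the 160-character constraint concrete, the following Python sketch formats a quiz question with four lettered options and rejects any message that would not fit in a single text. The message layout and the name `format_quiz_sms` are hypothetical, not the project's code. The sketch counts characters only; it does not model how a GSM modem encodes Vietnamese diacritics, which can reduce the usable length of a single message.

```python
from typing import Sequence

SMS_CHAR_LIMIT = 160  # single-message limit that applied to feature phones in mCME v1.0


def format_quiz_sms(stem: str, options: Sequence[str], limit: int = SMS_CHAR_LIMIT) -> str:
    """Build a one-SMS quiz message such as 'Question? a) ... b) ... c) ... d) ...'.

    Raises ValueError if there are not exactly four options or if the
    assembled message is longer than the character limit.
    """
    if len(options) != 4:
        raise ValueError("expected exactly four answer options (a-d)")
    labelled = " ".join(f"{letter}) {text}" for letter, text in zip("abcd", options))
    message = f"{stem} {labelled}"
    if len(message) > limit:
        raise ValueError(f"message has {len(message)} characters; limit is {limit}")
    return message


if __name__ == "__main__":
    print(format_quiz_sms("Normal adult resting heart rate (bpm)?",
                          ["20-40", "60-100", "120-140", "160-180"]))
```

Running a check like this when content is uploaded, rather than at send time, would let content writers shorten an item before it reaches participants.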

The architecture of the SMS delivery system is summarized in Figure 2. Briefly, the SMS system was built around a Structured Query Language (SQL) database that interfaced with a Global System for Mobile Communications (GSM) modem (see previously published articles for more detail) (11,13). The SQL database stored participants’ identification numbers, phone numbers, and randomization status, as well as the CME content to be delivered. For mCME v1.0, the system was programmed to send three different sets of SMS, depending on each participant’s randomization group, and to respond appropriately to replies sent by CBPAs. For example, group 2 participants, who received quiz questions, would reply with “a,” “b,” “c,” or “d”; the system determined whether that answer was correct and sent a response either congratulating them on a correct answer or providing the correct answer. During the 6-month intervention, group 2 participants’ behavior was tracked through their interaction with the system, including rates of completion of daily questions, rates of correct answers, and rates of non-conforming answers. The GSM modem allowed data transmission between a PC or laptop computer and cell phones using a wireless transmitter, turning the computer into a system that sent and received SMS to and from mobile devices. These data sets could then be exported as Excel files for subsequent analysis in SAS.
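
The reply-handling and tracking logic described above can be sketched in Python, using the standard-library `sqlite3` module as a stand-in for the project's SQL database. The table layout, status labels, reply wording, and the assumption that each incoming reply has already been matched to a question ID are all hypothetical; reading and sending messages through the GSM modem is not shown.

```python
import sqlite3

VALID_CHOICES = {"a", "b", "c", "d"}


def create_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical tables: who is enrolled, which answer is correct, what was received.
    conn.executescript(
        """
        CREATE TABLE participants (
            participant_id TEXT PRIMARY KEY,
            phone TEXT UNIQUE NOT NULL,
            arm INTEGER NOT NULL  -- 1 = control, 2 = daily quiz, 3 = daily fact (v1.0)
        );
        CREATE TABLE questions (
            question_id INTEGER PRIMARY KEY,
            correct_choice TEXT NOT NULL  -- one of 'a'..'d'
        );
        CREATE TABLE responses (
            participant_id TEXT NOT NULL,
            question_id INTEGER NOT NULL,
            raw_text TEXT NOT NULL,
            status TEXT NOT NULL  -- 'correct', 'incorrect', or 'nonconforming'
        );
        """
    )


def handle_reply(conn, phone, question_id, raw_text):
    """Score one incoming SMS reply, log it, and return the text to send back."""
    row = conn.execute(
        "SELECT participant_id FROM participants WHERE phone = ?", (phone,)
    ).fetchone()
    if row is None:
        return None  # sender is not an enrolled participant; send nothing
    # Assumes question_id exists; a missing question would raise TypeError here.
    (correct_choice,) = conn.execute(
        "SELECT correct_choice FROM questions WHERE question_id = ?", (question_id,)
    ).fetchone()
    answer = raw_text.strip().lower()
    if answer not in VALID_CHOICES:
        status, reply = "nonconforming", "Please reply with a, b, c, or d."
    elif answer == correct_choice:
        status, reply = "correct", "Correct! Well done."
    else:
        status, reply = "incorrect", f"Not quite. The correct answer is {correct_choice}."
    conn.execute(
        "INSERT INTO responses VALUES (?, ?, ?, ?)",
        (row[0], question_id, raw_text, status),
    )
    return reply


def response_rates(conn, participant_id, questions_sent):
    """Completion rate, correct-answer rate, and non-conforming count for one participant.

    Assumes at most one conforming reply per question.
    """
    counts = dict(
        conn.execute(
            "SELECT status, COUNT(*) FROM responses WHERE participant_id = ? GROUP BY status",
            (participant_id,),
        ).fetchall()
    )
    answered = counts.get("correct", 0) + counts.get("incorrect", 0)
    return {
        "completion_rate": answered / questions_sent,
        "correct_rate": counts.get("correct", 0) / answered if answered else 0.0,
        "nonconforming": counts.get("nonconforming", 0),
    }
```

Logging non-conforming replies as their own status, rather than discarding them, is what allows the third tracked rate to be computed at all.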

In addition to writing the technical program for the intervention, CPRID’s team hosted the intervention on MOH servers. The main advantage of developing the code de novo was that the technical expertise for operating the system was housed within the MOH, creating local ownership and facilitating future use.

Content development

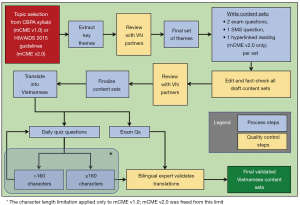

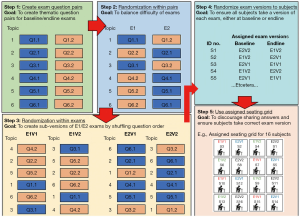

The SMS content focused on the specific medical knowledge required of CBPAs and was extracted from the Thai Nguyen Medical College’s CBPA curriculum. Daily quiz questions and exam questions for the baseline and endline assessments spanned thematic content sets across six topic areas (surgery, internal medicine, pediatrics, infectious diseases, sexually transmitted infections, and family planning). The content development process is summarized in Figure 3. For mCME v1.0, we created the content in sets of three: two exam questions and one SMS question, all focused on the same topic but written as distinct questions. The questions deliberately differed because we were less interested in whether participants could memorize the answers to SMS questions. Rather, we wished to determine whether participants were acquiring new information beyond the quiz questions themselves, a process we termed “lateral learning”.
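
One way to represent the three-item content sets is a small record type that enforces the rule that all three questions differ. This Python sketch is illustrative only; the `ContentSet` name and its fields are hypothetical, and the distinctness check catches verbatim duplicates only, not questions that are merely too similar.

```python
from dataclasses import dataclass

# The six topic areas named for mCME v1.0.
TOPIC_AREAS = (
    "surgery",
    "internal medicine",
    "pediatrics",
    "infectious diseases",
    "sexually transmitted infections",
    "family planning",
)


@dataclass(frozen=True)
class ContentSet:
    """One topic's content: a daily SMS quiz item plus two exam items."""
    topic_area: str
    sms_question: str
    exam_question_1: str
    exam_question_2: str

    def validate(self) -> None:
        if self.topic_area not in TOPIC_AREAS:
            raise ValueError(f"unknown topic area: {self.topic_area!r}")
        # All three must differ so the exams test lateral learning rather than
        # recall of the exact SMS question (verbatim comparison only).
        items = {self.sms_question, self.exam_question_1, self.exam_question_2}
        if len(items) != 3:
            raise ValueError("SMS and exam questions must be three distinct items")


def sets_per_area(content_sets):
    """Validate every set and count how many fall in each topic area."""
    counts = {area: 0 for area in TOPIC_AREAS}
    for content_set in content_sets:
        content_set.validate()
        counts[content_set.topic_area] += 1
    return counts
```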

Assessment and evaluation procedures

All participants completed a baseline medical knowledge examination at enrollment, specific to their area of knowledge as trained CBPAs. This 100-item multiple-choice examination focused on the same topics delivered to the intervention groups during the intervention period. At endline, participants again completed a 100-item medical knowledge exam consisting of different questions covering the same topic areas. A significant challenge was ensuring that the baseline and endline examinations were equally difficult, so that any change in scores between the two exams would not be misinterpreted. To address this, we created two exam questions on each topic (as described above) and randomly assigned the two questions in each pair to different exam versions. To further guard against potential bias, we randomly assigned participants in a 1:1 ratio to take either exam version 1 at baseline and exam version 2 at endline, or vice versa. This ensured that equal numbers of participants completed each version at baseline and at endline, that all participants ultimately saw the same set of questions, and that the difficulty of the baseline and endline examinations was balanced statistically through randomization (Figure 4).
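
The two randomization steps can be sketched in Python as follows, assuming simple (unstratified) allocation and a seeded `random.Random` generator for reproducibility. The function names are hypothetical; this illustrates the design rather than reproducing the project's actual procedure.

```python
import random


def build_exam_versions(question_pairs, rng):
    """Split each topic's pair of questions at random between exam versions 1 and 2."""
    version_1, version_2 = [], []
    for first, second in question_pairs:
        if rng.random() < 0.5:  # coin flip decides which item goes to version 1
            first, second = second, first
        version_1.append(first)
        version_2.append(second)
    return version_1, version_2


def assign_exam_order(participant_ids, rng):
    """Randomize participants 1:1 to (baseline, endline) = (1, 2) or (2, 1).

    With an odd number of participants, the extra person takes order (2, 1).
    """
    ids = list(participant_ids)
    rng.shuffle(ids)
    half = len(ids) // 2
    return {pid: (1, 2) if i < half else (2, 1) for i, pid in enumerate(ids)}
```

For example, `build_exam_versions(pairs, random.Random(2015))` applied to 100 topic pairs yields two 100-item exams in which every topic appears exactly once in each version, so any difference in difficulty between versions is due to chance alone.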

Individual performance on the baseline and endline exams was important to assess. However, CBPAs often work collaboratively, and even with exam proctors present, we feared it would be difficult to prevent CBPAs from sharing answers during the examinations. We therefore created sub-versions of exam versions 1 and 2 (i.e., v1.1 and v1.2; v2.1 and v2.2) by randomly changing the order of the questions (while keeping the same questions), and then seated the CBPAs according to a grid such that no individual was adjacent to another taking the same examination version (Figure 4).
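
A minimal Python sketch of the sub-version and seating steps follows. It assumes a rectangular grid of seats and treats each of the four sub-versions as a distinct exam; the tiling pattern and function names are hypothetical, diagonal neighbours are counted as adjacent (possibly stricter than the project required), and matching each participant's randomized exam version to a seat is not shown.

```python
import random

SUBVERSIONS = ("v1.1", "v1.2", "v2.1", "v2.2")


def make_subversions(questions, rng, n=2):
    """Return n orderings of the same questions, each independently shuffled."""
    orderings = []
    for _ in range(n):
        order = list(questions)
        rng.shuffle(order)
        orderings.append(order)
    return orderings


def seating_plan(rows, cols):
    """Tile the room: rows alternate versions 1 and 2, columns alternate .1 and .2."""
    return [[SUBVERSIONS[(r % 2) * 2 + (c % 2)] for c in range(cols)] for r in range(rows)]


def has_adjacent_duplicate(plan):
    """True if any two touching seats (including diagonals) share a sub-version."""
    rows, cols = len(plan), len(plan[0])
    for r in range(rows):
        for c in range(cols):
            # These four offsets visit every neighbouring pair exactly once.
            for dr, dc in ((0, 1), (1, -1), (1, 0), (1, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols and plan[rr][cc] == plan[r][c]:
                    return True
    return False
```

Because the sub-version index depends on both row parity and column parity, any two touching seats differ in at least one parity, which is why the 2 × 2 tiling never places identical sub-versions side by side.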

Phase 2: development of mCME v2.0

Because we had established a research methodology, a robust software system, and a strong team, and had gained experience in content development and outcome evaluation, implementing mCME v2.0 was far simpler than implementing mCME v1.0. Even so, adapting the intervention, developing new content, and managing the clinical trial operations required a highly structured project management process. We also note that funding for mCME v2.0 was contingent on shifting the focus of the project to HIV/AIDS. Accordingly, participants for the second RCT were recruited from HIV clinicians who had completed MOH-sponsored HIV/AIDS training with specialist certification and were working in one of three rural provinces: Thái Nguyên, Hải Phòng, and Quảng Ninh. Inclusion criteria for mCME v2.0 included ownership of a smartphone and willingness to participate in study activities. Thus, while the CBPA participants in mCME v1.0 varied widely in skill and knowledge, mCME v2.0 engaged a group of specialist clinicians focused exclusively on HIV care.

Further, for the implementation of v2.0, we established an additional partnership with the Vietnam Administration for AIDS Control (VAAC), the branch of the MOH responsible for HIV treatment and care and for workforce training. In addition, after Pathfinder International closed its Vietnam operations in 2016, its Vietnam Country Director, Dr. Ngoc Bao Le, formed a new non-governmental organization, Consulting Researching on Community Development (CRCD), which was staffed by many members of Pathfinder’s administrative team. Thus, the change in implementing partner between the two trials was mainly a change in name, since we continued to work with the same individuals.

Changes to content delivery

Mixed-methods data from mCME v1.0 informed a series of modifications for mCME v2.0 (12). These are summarized in Table 1 and described below. In mCME v1.0, we made no attempt to connect users with technical information to support lateral learning, because we assumed that the CBPAs had access to a CBPA syllabus, textbooks, or other reliable resources. Qualitative data confirmed that the CBPAs usually had access to such materials but rarely used them, relying instead on consultation with colleagues or Google searches (11,12). Therefore, in mCME v2.0, we sought to make this connection easier by including hyperlinks to two specific sources of technical materials: (I) the 2015 HIV/AIDS National Guidelines for Vietnam (14) and (II) existing online CME courses on particular HIV topics hosted by HMU. For the former, we provided a daily hyperlink to written material as part of the SMS response to each participant’s answer to the daily quiz question. When the participant clicked on the link, it provided an SMS with 2–3 paragraphs of detailed technical information aligned with the material in that day’s question (Figure S1). The links to online courses, delivered at the beginning of particular topic content modules (see below), connected participants to a relevant HMU course, typically consisting of a video-taped lecture in MPEG format, a set of linked readings in PDF format, and an end-of-course online quiz distinct from the daily SMS quiz questions.
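
The v2.0 answer feedback with an attached reading link could look roughly like the Python sketch below. The `READING_LINKS` mapping, the example.org URL, and the reply wording are hypothetical placeholders; the real links pointed to excerpts of the national guidelines hosted by the project.

```python
# Hypothetical mapping from daily question ID to the URL of its guideline excerpt.
READING_LINKS = {
    101: "https://example.org/guidelines/arv-first-line",
}


def compose_v2_reply(raw_answer, correct_choice, question_id, links=READING_LINKS):
    """Answer feedback plus a link to the day's technical reading (v2.0 style)."""
    answer = raw_answer.strip().lower()
    if answer == correct_choice:
        feedback = "Correct!"
    else:
        feedback = f"The correct answer is {correct_choice}."
    link = links.get(question_id)
    # v2.0 targeted smartphones, so the reply is not held to 160 characters.
    return f"{feedback} Read more: {link}" if link else feedback
```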


In mCME v1.0, the topic of the daily messages changed each day in random order. The CBPAs strongly criticized this as confusing and as making it difficult to build momentum toward mastering a particular topic. In response, the content for v2.0 was organized into 15 modules, each centered on a particular topic or theme of HIV care and each corresponding to an existing online CME course available through HMU (Table 2). Each theme/topic was delivered over 1–3 weeks, depending on the extent of the content covered in the corresponding course. These content areas and HMU courses were selected by consensus of the investigators in Boston and Hanoi as the most important and relevant to HIV clinical care in Vietnam. Finally, because the second trial used smartphones, the SMS messages did not need to stay within the 160-character limit. All other elements of content delivery for mCME v2.0 were identical to those used in v1.0 (Figure 3).
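
The shift from random daily topics to sequential modules amounts to a simple scheduling rule. This Python sketch assumes one quiz question per calendar day (weekends included) and uses placeholder module names and an illustrative start date; none of these details are specified in the text.

```python
from datetime import date, timedelta


def build_schedule(modules, start):
    """Lay out one quiz question per calendar day, module by module.

    `modules` is an ordered list of (module_name, n_weeks, questions) tuples.
    Returns a list of (date, module_name, question) tuples.
    """
    schedule = []
    day = start
    for module_name, n_weeks, questions in modules:
        if not 1 <= n_weeks <= 3:
            raise ValueError(f"{module_name}: modules run for 1-3 weeks, got {n_weeks}")
        n_days = 7 * n_weeks
        if len(questions) < n_days:
            raise ValueError(f"{module_name}: needs {n_days} questions, has {len(questions)}")
        for question in questions[:n_days]:
            schedule.append((day, module_name, question))
            day += timedelta(days=1)
    return schedule
```

Keeping each module's questions contiguous is the point of the design: a participant sees a full week or more on one theme before the next begins, instead of a new random topic every day.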


Unlike mCME v1.0, v2.0 also included feedback messages to motivate users. The SQL database was programmed to tabulate each user’s correct answer rate automatically at the end of each thematic module and then to send users their own performance along with the average for all participants on that module. In this way, participants could immediately see their performance and compare themselves with their peers.
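
The end-of-module feedback calculation can be sketched in Python as follows. It assumes that the "average of all participants" is the mean of participants' individual correct-answer rates (a pooled rate across all answers would be an equally plausible reading) and that participants who answered nothing in a module receive no message; the message wording is hypothetical.

```python
from collections import defaultdict


def module_feedback(responses, module):
    """Build end-of-module feedback messages.

    `responses` is an iterable of (participant_id, module, is_correct) tuples.
    Returns {participant_id: message} for everyone who answered at least one
    question in `module`.
    """
    tallies = defaultdict(lambda: [0, 0])  # participant_id -> [correct, answered]
    for participant_id, resp_module, is_correct in responses:
        if resp_module == module:
            tallies[participant_id][1] += 1
            if is_correct:
                tallies[participant_id][0] += 1
    rates = {pid: correct / answered for pid, (correct, answered) in tallies.items()}
    if not rates:
        return {}
    group_average = sum(rates.values()) / len(rates)  # mean of individual rates
    return {
        pid: (f"{module}: you answered {rate:.0%} of questions correctly. "
              f"Average for all participants: {group_average:.0%}.")
        for pid, rate in rates.items()
    }
```

With two participants answering 2 of 2 and 1 of 2 questions correctly, both messages report a group average of 75%, while the individual lines read 100% and 50%.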

Changes to assessment and evaluation procedures

The daily technical readings were stored in the SQL database in the same way as the daily quiz questions, uploaded from Microsoft Excel source documents, which kept this modification fully internal to the SMS software system. Data on participants’ “clicks” on the technical hyperlinks were received by the GSM modem, providing detailed data on the extent and timing of each participant’s interaction with the mCME v2.0 system throughout the study.
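
A minimal Python sketch of how logged clicks might be summarized into measures of extent and timing per participant. The input format (participant ID, reading ID, timestamp) and the choice of summary fields are assumptions for illustration.

```python
from collections import defaultdict
from datetime import datetime


def summarize_clicks(clicks):
    """Summarize hyperlink clicks per participant.

    `clicks` is an iterable of (participant_id, reading_id, clicked_at) tuples,
    where clicked_at is a datetime.
    """
    readings = defaultdict(set)
    times = defaultdict(list)
    for participant_id, reading_id, clicked_at in clicks:
        readings[participant_id].add(reading_id)
        times[participant_id].append(clicked_at)
    return {
        pid: {
            "total_clicks": len(times[pid]),            # extent: every click counted
            "distinct_readings": len(readings[pid]),    # extent: unique readings opened
            "first_click": min(times[pid]),             # timing
            "last_click": max(times[pid]),              # timing
            "active_days": len({t.date() for t in times[pid]}),
        }
        for pid in readings
    }
```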

As in mCME v1.0, all participants completed medical knowledge examinations at baseline and endline, though the content was shifted to focus on knowledge specific to HIV clinicians. Although the specific baseline questions were not repeated at endline, the thematic areas were the same and covered the material introduced during the intervention, and the same methods were used to prevent collaboration or cheating during examinations. Most other evaluation procedures were substantially the same across both phases of the mCME Project, including the qualitative investigations at endline and the tracking of participants’ behavior during the intervention, such as rates of completion of daily questions and correct answer rates.

For the second trial, we had access to two additional sets of participant data (Figure 3): (I) their use of the daily technical reading hyperlinks; and (II) their use of, and activity within, the HMU courses. The former was captured in the SQL database automatically whenever a participant clicked on a daily reading hyperlink. At enrollment, all participants, whether in the control or intervention arm, were given a unique study ID number and then collectively instructed to enroll in a study-specific version of the HMU site that included only the 15 HMU courses aligned with the mCME modules (the full HMU CME site includes over 50 current HIV CME courses). The participants logged in using their study ID numbers as user names, allowing us to see when and how often participants logged into each course and what content they accessed within each course (video, readings, quiz). This provided objective, time-specific data on participants’ study habits throughout the study. Some of these data have been published previously (13); additional analyses are ongoing to improve our understanding of participants’ study habits.

Discussion

Lessons learned

The ultimate success of the mCME project depended critically on the strong collaborative team established early on, and on our ability to harvest quantitative and qualitative data from mCME v1.0 to identify and adapt specific intervention features. Overall, this project demonstrates the importance of aligning with local policy priorities. For example, there was a real need to improve CME in Vietnam, and testing a practical, feasible, and acceptable way to do so was relevant to the local context. Arguably, the most important aspect was close engagement with the Vietnamese MOH at every stage of the mCME project, from planning and implementation through assessment, analysis, and reporting of results. This engagement established MOH ownership of the SMS code and maintenance of the mCME intervention; the MOH has the authority to implement a future scaled-up version of mCME in Vietnam, and it now also has the technical capacity and experience to support such an intervention over time.

While this is a key message for any public health intervention, further lessons learned are described in five thematic areas below:

First, the value of a mixed methods approach, and the opportunity for participants themselves to inform further development cannot be overstated

Collecting qualitative as well as quantitative data from v1.0 participants allowed us to identify which elements of the intervention worked and which did not, and to make informed adaptations to improve the system. For the qualitative study, we purposively sampled participants with high and low uptake of the intervention (12). This gave us a comprehensive view of the experiences of different participants.

The importance of building a project around a theory-informed logic model, to map inputs/outputs/mediating processes

This, too, can serve as a case study, but a cautionary one. We must admit that our initial work was not formally structured around an a priori logic model, and it should have been. Instead, the failure of mCME v1.0 forced us to deduce a logic model after the fact and then use it to design mCME v2.0. While the end result was ultimately a success, if we were to do it over, we would deliberately use a theoretical framework and conceptual model from the start. The same applies to the behavioral change model, which we realized after the fact closely paralleled the Health Belief Model (15), albeit within a pedagogical context.

Ownership/expertise in software platform

In our proposal to NIH, we had planned to use an existing, commercially developed SMS system to deliver the intervention, but ultimately chose to build our software de novo. Without a formal comparison, we cannot judge which approach would have performed better or offered better features. What we can say is that our system performed as intended and was simple to deploy and inexpensive to develop. More importantly, the programming expertise was housed in the Vietnamese MOH and the intervention operated on ministry servers. In our view, this was a major advantage because it created local ownership of the system and internal expertise in developing and maintaining it. As described in another report, there were only three dropped messages in mCME v1.0 and one misclassified answer in mCME v2.0. Local ownership also allowed us to easily redesign the system for mCME v2.0 and has set the stage for expanding the mCME approach nationwide, again in close partnership with the ministry. Embedding the software and organizational memory within our in-country partner built trust and local capacity, which may ultimately determine whether the strategy can be brought to scale in Vietnam.

Aligning content to population

Given that v1.0 had no effect on either self-directed study behaviors or knowledge, addressing the lack of opportunities for “lateral learning” was a crucial aspect of planning for v2.0. The content for v2.0 was based primarily on the Vietnamese 2015 National HIV Guidelines, which were far more comprehensive in scope and detail than the CBPA curriculum loosely adapted for v1.0. The ability to provide specific hyperlinks, via the SMS intervention, to targeted material from the national guidelines relevant to each topic module (intended particularly for participants who answered the daily question incorrectly) was a significant advantage over v1.0, as was the ability to curate specific HIV topic modules based on the existing HMU CME curriculum. Because the HMU CME courses were online and accessible to all participants, v2.0 had a built-in mechanism for providing further self-study materials, as well as a built-in mechanism for monitoring self-study through the unique online log-ins provided to each participant. We believe that the hyperlinks provided in v2.0 reduced the “activation energy” required to search for self-study materials, whether static written reference guides or HMU CME lectures. Understanding the learning needs of the target population is critical.

Making learning easier and more interesting is important

We learned important things from the qualitative work in v1.0: users like interaction and feedback, and they lacked reliable information sources. For v2.0, we built on that knowledge by trying to keep users interested with more feedback (the competitive element of comparing module performance with peers). We also tried to give them easily accessible information through links to readings and online courses. The lesson is that understanding what a target population needs and likes can help in designing a high-quality, well-received system, and this will vary from place to place. In v2.0, participants in both groups were provided with their average performance on the daily questions as well as the average for all users each week. The ability to assess their performance relative to their peers can motivate participants toward directed self-study. While intervention participants in both versions received the correct answer when they answered incorrectly, only participants in v2.0 received the weekly report of their performance.

Conclusions

The development and implementation of a successful SMS-based mHealth CME intervention to increase self-study behaviors and improve medical knowledge among cadres of healthcare workers in Vietnam evolved over two phases. While mCME v1.0 demonstrated that an SMS platform can be well accepted and technically feasible and can significantly improve participants’ self-efficacy, only by applying lessons learned from both qualitative and quantitative methods were we able to make modifications that resulted in both changes in self-study behavior and an increase in medical knowledge at the end of the intervention. Crucially, qualitative feedback from participants in mCME v1.0 was instrumental in the development of v2.0, particularly with respect to participants’ preferences regarding the content delivered.

Future efforts to build or scale up mobile-delivered CME should take into account the chief lessons learned from this effort, including the importance of: (I) selecting participants with a specific scope of practice and delivering subject-specific content; (II) organizing and sequencing that content; (III) linking to source material beyond the mobile messages that participants can easily access; (IV) giving adult learners feedback on both their individual performance and their performance relative to peers; and (V) owning the software and its packaging, so that iterative changes can be made during project implementation.

While we have shown effectiveness through a step-wise approach, these studies have limitations, chiefly that they were performed in small, relatively homogeneous populations. Proof of concept for mCME v2.0 required smartphones to link to other learning materials, which may limit generalizability, particularly in more resource-limited or geographically isolated settings. Additionally, we assessed medical knowledge only at a single time point, shortly after the end of the intervention, so the durability of effects on study behaviors and knowledge retention is unknown. Any larger scale-up will need to include appropriate monitoring and evaluation of participants’ CME over time.

Acknowledgements

Funding: The study is funded by the Fogarty International Center at the US National Institutes of Health under grant 1R21-TW009911: 3R21TW009911-02S1 and 5R21TW009911-02.

Footnote

Conflicts of Interest: The authors have no conflicts of interest to declare.

References

- Law on Medical Examination and Treatment, No. 40/2009/QH12 (2009). Available online: https://vanbanphapluat.co/law-no-40-2009-qh12-on-medical-examination-and-treatment

- van der Velden T, Van HN, Quoc HN, et al. Continuing medical education in Vietnam: new legislation and new roles for medical schools. J Contin Educ Health Prof 2010;30:144-8. [Crossref] [PubMed]

- McNabb ME. Introducing mobile technologies to strengthen the national continuing medical education program in Vietnam. Boston, Massachusetts: Boston University School of Public Health, 2016.

- Alipour S, Jannat F, Hosseini L. Teaching breast cancer screening via text messages as part of continuing education for working nurses: a case-control study. Asian Pac J Cancer Prev 2014;15:5607-9. [Crossref] [PubMed]

- Armstrong K, Liu F, Seymour A, et al. Evaluation of txt2MEDLINE and development of short messaging service-optimized, clinical practice guidelines in Botswana. Telemed J E Health 2012;18:14-7. [Crossref] [PubMed]

- Chen Y, Yang K, Jing T, et al. Use of text messages to communicate clinical recommendations to health workers in rural China: a cluster-randomized trial. Bull World Health Organ 2014;92:474-81. [Crossref] [PubMed]

- Mount HR, Zakrajsek T, Huffman M, et al. Text messaging to improve resident knowledge: a randomized controlled trial. Fam Med 2015;47:37-42. [PubMed]

- O'Donovan J, Bersin A, O'Donovan C. The effectiveness of mobile health (mHealth) technologies to train healthcare professionals in developing countries: a review of the literature. BMJ Innov 2015;1:33-6. [Crossref]

- Free C, Phillips G, Watson L, et al. The effectiveness of mobile-health technologies to improve health care service delivery processes: a systematic review and meta-analysis. PLoS Med 2013;10:e1001363. [Crossref] [PubMed]

- Free C, Phillips G, Galli L, et al. The effectiveness of mobile-health technology-based health behaviour change or disease management interventions for health care consumers: a systematic review. PLoS Med 2013;10:e1001362. [Crossref] [PubMed]

- Gill CJ, Le Ngoc B, Halim N, et al. The mCME Project: A Randomized Controlled Trial of an SMS-Based Continuing Medical Education Intervention for Improving Medical Knowledge among Vietnamese Community Based Physicians' Assistants. PLoS One 2016;11:e0166293. [Crossref] [PubMed]

- Sabin LL, Larson Williams A, Le BN, et al. Benefits and Limitations of Text Messages to Stimulate Higher Learning Among Community Providers: Participants' Views of an mHealth Intervention to Support Continuing Medical Education in Vietnam. Glob Health Sci Pract 2017;5:261-73. [Crossref] [PubMed]

- Gill CJ, Le NB, Halim N, et al. mCME project V.2.0: randomised controlled trial of a revised SMS-based continuing medical education intervention among HIV clinicians in Vietnam. BMJ Glob Health 2018;3:e000632. [Crossref] [PubMed]

- Vietnam Administration for AIDS Control. HIV/AIDS Treatment Guidelines 2015. Ministry of Health, Vietnam, 2015.

- Cummings KM, Jette AM, Rosenstock IM. Construct validation of the health belief model. Health Educ Monogr 1978;6:394-405. [Crossref] [PubMed]

Cite this article as: Bonawitz R, Bird L, Le NB, Nguyen VH, Halim N, Williams AL, Sabin L, Gill CJ. Implementing the mobile continuing medical education (mCME) project in Vietnam: making it work and sharing lessons learned. mHealth 2019;5:7.